OpenAI’s sudden discontinuation of the Sora application in March 2026 leaves a significant gap in the market, but digital strategists can generate professional AI video for free online using platforms like Google Veo 3.1, Kling 3.0, and Luma Dream Machine.

These alternative applications offer generous daily or monthly free credit tiers, enabling production teams to produce cinematic clips that can be upscaled to 4K, maintain strict character consistency, and export high-quality assets for commercial campaigns without committing to an expensive paid subscription.

The Guardian recently examined why the abrupt platform closure occurred — their findings are worth reading in full.

Production teams must adapt creative workflows immediately to maintain a competitive edge in an increasingly visual digital landscape.

Relying on a single, closed-ecosystem application exposes corporate brands to unnecessary operational risk when sudden corporate pivots occur or beta platforms are permanently sunsetted.

Research from Wyzowl confirms that 91% of businesses now use video as a primary marketing tool — the full 2026 report on video marketing statistics is available on their website.

Integrating robust, free artificial intelligence alternatives ensures marketing pipelines remain uninterrupted and highly scalable across global markets.

Marketing directors can seamlessly pivot to robust platforms that offer distinct advantages, such as native audio synchronisation or superior fluid dynamics, depending on specific campaign requirements.

To understand how to leverage these visual assets across digital touchpoints, organisations must analyse why online video marketing is essential.

Understanding the underlying economics of generative media helps technical leads navigate this volatile software market with greater confidence and strategic foresight.

Training and running foundation models requires astronomical compute power, forcing developers to ruthlessly prioritise enterprise software over experimental consumer applications. Forbes recently examined why AI and automation are dominating every customer experience conversation — their insights into generative AI infrastructure are worth reading in full.

The Abrupt Discontinuation of OpenAI’s Sora

OpenAI officially discontinued the Sora application and its developer API on 24 March 2026, citing a strategic refocus on robotics and enterprise solutions.

Operating the high-fidelity video generation platform cost an estimated $15 million per day, making the consumer-facing app financially unsustainable ahead of the company’s anticipated initial public offering.

A 2026 study by The Wall Street Journal found that OpenAI is actively cutting side quests to consolidate its core coding products — you can explore their strategic analysis methodology directly on their platform.

The collapse of a landmark $1 billion licensing deal with The Walt Disney Company served as the final catalyst for this high-profile product termination. Disney had originally planned to license over 200 proprietary characters, including those from the Marvel and Star Wars universes, for users to animate within the OpenAI ecosystem.

BBC News recently examined why this lucrative media partnership failed to materialise (https://www.bbc.com/news/).

Severe copyright backlash from creative unions and international broadcasters heavily influenced the boardroom decision to pull the plug on the project. Film studios threatened extensive litigation over unlicensed training data, whilst the Japan Commercial Broadcasters’ Association warned that the tool could irreparably damage regional content ecosystems.

Corporate strategists must recognise that frontier technology companies will consistently prioritise lucrative business-to-business tools over resource-heavy creative experiments.

This harsh economic reality dictates that agencies should diversify their software stack rather than building an entire production pipeline around a single, venture-backed video model.

The Financial Times recently examined why generative AI gross margins remain structurally challenged — their financial analysis of foundation models is worth reading in full.

Case Study: The Disney Ecosystem Pivot

In late 2025, Disney executives anticipated integrating Sora-generated content directly into the Disney Plus platform to streamline internal storytelling workflows.

When OpenAI abruptly halted the project, Disney immediately pivoted to evaluating open-source models and alternative platforms that offered stronger intellectual property protections and data governance.

Reuters recently examined why Disney shifted its focus toward proprietary internal models — their reporting on the $1 billion equity stake withdrawal is worth reading in full.

Why Did OpenAI Shut Down Sora?

The fundamental computing architecture required to process pixel-level video generation proved far too inefficient for mass consumer deployment.

Technical researchers discovered that predicting visual frames consumed vastly more graphical processing units than calculating logical text strings, rendering the financial model unviable for a free application.

Research from the University of California confirms that pixel-level world models face insurmountable scaling barriers — the comprehensive academic report on visual generation limits is available via their research portal.

Competitor platforms aggressively closed the technological gap during the months Sora spent in closed beta testing and restricted preview phases.

By the time the standalone application launched to the public, alternatives like Runway Gen-4 and Kling 3.0 were already producing comparable two-minute cinematic clips with established user bases.

MindStudio outlines the current guidance on how competitors overtook OpenAI’s video lead in detail, noting that models like Kling were already producing two-minute clips.

The application also presented an insurmountable content moderation nightmare that drained internal resources and invited intense regulatory scrutiny. Identifying and blocking deepfake material, violent imagery, and copyright-protected characters in real-time video generation proved far more complex than filtering text-based chatbot outputs.

A 2026 study by Copyleaks found that harmful manipulated media easily bypassed Sora’s initial safety guardrails — you can explore their deepfake detection methodology directly on their platform.

Internal corporate restructuring at OpenAI further sidelined the video project as leadership focused on deploying a unified “superapp” integrating ChatGPT, Codex, and browser functionalities.

Sam Altman directed engineering teams to abandon peripheral projects and concentrate entirely on artificial general intelligence and physical robotics applications.

Business Today recently examined why OpenAI abandoned creators to focus on coders — their investigation into the company’s enterprise pivot is worth reading in full.

The UK Regulatory Landscape for AI Video Production

Navigating the synthetic media space requires a firm grasp of the UK’s evolving copyright frameworks and stringent data protection regulations.

The House of Lords Communications and Digital Committee published a definitive report in March 2026 establishing a strict licensing-first approach for generative artificial intelligence developers.

A 2026 study by the UK Parliament found that unregulated generative models pose a clear and present danger to the £124 billion creative sector — you can explore their legislative methodology within the official committee release.

Marketing agencies cannot rely on platforms that train their models on unlicensed intellectual property if they intend to use the outputs for commercial UK campaigns.

The government officially rejected proposed text and data mining exceptions, meaning developers must secure explicit permission before ingesting copyrighted material for model training.

The Hogan Lovells legal team outlines the current guidance on artificial intelligence copyright enforcement in detail, including the reasoning behind that rejection.

Using synthetic tools to replicate a real person’s likeness or voice without consent now carries significant legal and reputational risk for commercial brands.

The UK government is currently developing enhanced statutory protections against unauthorised digital replicas and “in the style of” outputs to safeguard individual personality rights.

Osborne Clarke recently examined why these specific deepfake labelling requirements are accelerating across Europe — their regulatory insights into the EU AI Act are worth reading in full.

When selecting a free video generator, compliance officers must prioritise platforms that guarantee commercial safety and transparent data provenance.

Tools trained on ethically sourced or licensed datasets protect advertising agencies from downstream copyright infringement claims and protect client reputations from public backlash.

Research from Lewis Silkin confirms that businesses face increasing liability for deploying unlicensed media — the full legal report on AI training data transparency is available via their portal.

Case Study: Public Sector Compliance Standards

A regional UK government council needed to produce a series of public health announcement videos but faced strict procurement rules regarding copyright compliance.

By utilising Adobe Firefly’s commercially safe model rather than an unregulated open-source generator, the council successfully launched the campaign while adhering to all statutory data provenance guidelines. DLA Piper outlines the current guidance on public sector intellectual property obligations in detail, highlighting how the rejection of broad data mining exceptions protects rightsholders.

The Evolving Economics of Video Marketing in 2026

The financial imperative to adopt synthetic generation tools stems from the unprecedented demand for video content across all digital marketing channels.

B2B marketing benchmarks demonstrate that companies allocating substantial budgets to visual media see revenue growth accelerate nearly fifty percent faster than those relying solely on text.

Research from Wyzowl confirms that 91% of businesses now use video as a core marketing channel — the full statistical breakdown of commercial video adoption is available for review.

Producing high-quality footage traditionally requires prohibitive expenditure on location scouting, professional equipment rentals, cast salaries, and extensive post-production editing.

Synthetic generation entirely removes these logistical bottlenecks, allowing single-person marketing teams to output agency-grade visual assets from a standard laptop interface.

A 2026 study by the Content Marketing Institute found that 61% of B2B marketers expect their visual investment to increase — you can explore their corporate spending methodology directly.

Short-form content currently drives the highest return on investment across corporate social media platforms, particularly on LinkedIn and YouTube Shorts.

These brief, punchy formats perfectly suit the current limitations of free artificial intelligence generators, which typically cap outputs at between five and ten seconds per prompt. HubSpot recently examined why short-form video is the most leveraged B2B media format — their findings on social media engagement algorithms are worth reading in full.

Engagement metrics heavily favour brands that can maintain a consistent, daily publishing schedule rather than relying on occasional, high-budget hero campaigns.

Free generation tools enable a volume-based strategy, allowing digital teams to test multiple visual hooks and iterate rapidly based on real-time audience analytics. Salt & Gorse outlines the current guidance on achieving a 225% return on investment through scalable content in detail, noting that consistent volume outperforms occasional hero campaigns.

What Are The Best Free AI Video Generators Online?

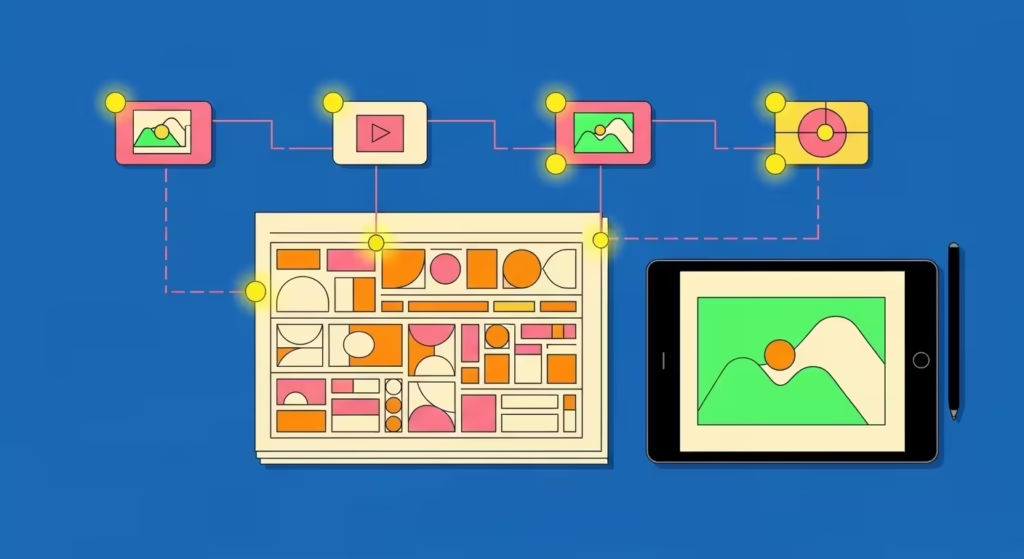

Replacing a centralised platform requires a multi-model workflow that leverages the free tiers of several competing visual generation applications.

No single algorithmic model excels at every aesthetic requirement, meaning strategists must combine different generators to match the specific tool to the creative intent.

You can read more at https://curiousrefuge.com/blog/best-ai-video-tool-subscription-2026.

Google Veo 3.1: The Standard for Cinematic Realism

Google DeepMind’s Veo 3.1 currently dominates the enterprise market for photorealistic, physics-based video generation featuring native audio synchronisation.

Users can access this powerful model at no cost through Google Cloud’s trial credits or the Google AI Test Kitchen, which grants substantial capacity for commercial testing.

Google Search Central outlines the current guidance on accessing generative previews securely, including how cloud trial credits grant capacity for commercial testing.

The underlying architecture excels at maintaining temporal consistency, meaning characters and background environments do not warp or melt as the virtual camera moves.

The model understands complex physical interactions, such as light refracting through running water or dust settling in a sunbeam, making it ideal for high-end product showcases. A 2026 study by Atlas Cloud found that Veo delivers the highest baseline resolution at 1080p — you can explore their physical realism testing methodology via their developer blog.

Operators must master the “5-Layer Framework” to extract maximum value from the DeepMind natural language prompting engine.

Text prompts should explicitly define the camera lens focal length, precise lighting conditions, subject action, environmental atmosphere, and specific sound design elements.
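The five layers can be kept consistent across a team by assembling prompts from a small template rather than writing them freehand. The sketch below is only an illustration: the `ShotPrompt` class, its field names, and the labelled output format are hypothetical and not part of any Google tooling, and the sample shot is invented.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    """One shot described with the five layers: hypothetical helper, not a Veo API."""
    camera: str       # lens focal length and framing
    lighting: str     # precise lighting conditions
    action: str       # what the subject does
    atmosphere: str   # environmental mood
    sound: str        # sound design elements

    def render(self) -> str:
        """Join the five layers into one labelled prompt string."""
        layers = [
            ("Camera", self.camera),
            ("Lighting", self.lighting),
            ("Action", self.action),
            ("Atmosphere", self.atmosphere),
            ("Sound", self.sound),
        ]
        # Refuse to render if any layer was left blank.
        missing = [name for name, value in layers if not value.strip()]
        if missing:
            raise ValueError(f"Missing prompt layers: {', '.join(missing)}")
        return " ".join(
            f"{name}: {value.strip().rstrip('.')}." for name, value in layers
        )


prompt = ShotPrompt(
    camera="35mm lens, slow dolly-in at eye level",
    lighting="low golden-hour sun with soft rim light",
    action="a barista pours steamed milk into a ceramic cup",
    atmosphere="quiet cafe, light steam drifting in the air",
    sound="gentle espresso machine hiss and muffled street noise",
)
print(prompt.render())
```

Raising an error on an empty layer is a deliberate choice here: it catches incomplete prompts before they spend any free credits.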

| AI Video Generator | Daily Free Limit | Best For | Max Base Resolution |
| --- | --- | --- | --- |
| Google Veo 3.1 | Varies (Credits) | Cinematic Realism & Lighting | 1080p |
| Kling 3.0 | 66 Credits | Complex Human Motion | 720p/1080p |
| Seedance 2.0 | 30 Credits | Character Consistency | 720p |
| CapCut AI | Unlimited | Final Post-Processing | 4K (Upscaled) |

Generating extended sequences requires advanced techniques such as the “Motion-Lock” hack to prevent latent visual drift over long clip durations. By supplying identical start and end reference frames, directors force the algorithm to bridge the visual gap with physically accurate, seamless motion.

Apidog recently examined why developers utilise these specific prompts to manage Veo APIs — their technical findings regarding motion-lock hacks are worth reading in full.

Kling 3.0: Sustained Narrative and Complex Motion

Kling 3.0, developed by technology firm Kuaishou, offers the most generous free tier currently available within the synthetic media industry.

The platform provides 66 daily credits that replenish every 24 hours, allowing creators to generate roughly six high-quality, five-second standard clips per day without spending a single penny. You can read Atlas Cloud’s full breakdown of the Kling daily credit system and pricing structure.
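The arithmetic behind that daily allowance is worth making explicit when planning a shoot. The sketch below assumes a cost of 10 credits per five-second standard clip, a figure chosen only because it is consistent with "roughly six" clips from 66 credits; the real cost per clip should be checked on the platform. It also assumes unused credits do not roll over to the next day.

```python
import math

DAILY_CREDITS = 66      # free daily allowance quoted above
CREDITS_PER_CLIP = 10   # assumption: illustrative cost of one five-second clip


def clips_per_day(daily_credits: int, credits_per_clip: int) -> tuple[int, int]:
    """Return (whole clips affordable today, credits left unspent)."""
    if credits_per_clip <= 0:
        raise ValueError("credits_per_clip must be positive")
    return divmod(daily_credits, credits_per_clip)


def days_needed(total_clips: int, daily_credits: int, credits_per_clip: int) -> int:
    """Days required to render total_clips, assuming no credit rollover."""
    per_day, _ = clips_per_day(daily_credits, credits_per_clip)
    if per_day == 0:
        raise ValueError("daily allowance is smaller than the cost of one clip")
    return math.ceil(total_clips / per_day)


clips, leftover = clips_per_day(DAILY_CREDITS, CREDITS_PER_CLIP)
print(clips, leftover)                                    # 6 6
print(days_needed(20, DAILY_CREDITS, CREDITS_PER_CLIP))   # 4
```

Under these assumptions a twenty-shot storyboard needs four days of free credits, which is useful to know before committing to a delivery date.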

The proprietary physics engine handles complex human motion and extended narrative sequences far more effectively than competing Western algorithmic models.

Directors can generate continuous clips up to two minutes long, making it an exceptional utility for building comprehensive storyboards or narrative-driven documentary content.

Research from Pollo AI confirms that users unlock 166 monthly credits simply by logging into the platform — the full report on accessing Kling’s motion engine is available on their website.

The primary limitation of the free tier involves a 720p resolution cap and the mandatory inclusion of a subtle corporate watermark.

Production teams can easily overcome the resolution limit by running the output through a free open-source upscaler prior to publishing on social media channels. You can read PCMag’s full breakdown of effective resolution upscaling workflows for AI video limits.
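As one possible way to script that step, the sketch below builds an FFmpeg command that resizes a 720p clip to 1080p with the Lanczos scaler. FFmpeg is an assumption here, as the article does not name a specific upscaler, and Lanczos resizing is conventional interpolation rather than AI super-resolution. The file names are placeholders, and the command is only constructed and printed, not executed.

```python
import shlex


def build_upscale_command(src: str, dst: str, width: int = 1920, height: int = 1080) -> list[str]:
    """Build (but do not run) an FFmpeg command that resizes src to width x height."""
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    return [
        "ffmpeg",
        "-i", src,                                        # input clip
        "-vf", f"scale={width}:{height}:flags=lanczos",   # Lanczos resize filter
        "-c:a", "copy",                                   # keep the audio stream untouched
        dst,
    ]


cmd = build_upscale_command("clip_720p.mp4", "clip_1080p.mp4")
print(shlex.join(cmd))
```

Passing the list to `subprocess.run(cmd, check=True)` would execute it on a machine where FFmpeg is installed.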

Case Study: The Automotive Launch Sequence

An independent automotive consultancy required a dynamic promotional video for a concept car but lacked the rendering budget for traditional 3D animation.

They utilised Kling 3.0’s daily free credits to generate twelve highly consistent tracking shots of the vehicle navigating a mountain pass, completing the project entirely in-house.

HubSpot outlines the current guidance on driving conversions through dynamic visual assets in detail, highlighting how product showcases perform better on social platforms.

Luma Dream Machine: Unmatched Fluid Dynamics

Luma AI’s Dream Machine excels specifically at rendering natural elements, intricate fluid dynamics, and dramatic, sweeping drone-style camera movements.

The platform provides 30 free generations per month on its base tier, providing adequate capacity for creating striking B-roll footage or cinematic establishing shots.

Luma Labs outlines the current guidance on their Dream Machine usage limits in detail, verifying that users receive thirty free generations per month on the base tier.

Directors should deploy Luma when a script calls for volatile elements that traditionally challenge standard neural networks, such as fire, smoke, running water, or explosive action.

The model processes atmospheric physics with remarkable accuracy, ensuring that a vehicle driving through a puddle creates a realistic, dispersed splash rather than a blurred visual artefact. A 2026 study by Flowith found that Luma leads the industry in natural effects rendering — you can explore their fluid dynamics ranking methodology directly.

The platform restricts the commercial utilisation of videos generated on its free tier, strictly limiting its application for direct, monetised advertising campaigns.

Agencies can still leverage these zero-cost generations internally to pitch initial concepts, build client mood boards, or test visual framing before committing budget to a commercial license. You can read Magic Hour’s full breakdown of Luma’s commercial licensing policies and subscription limits.

Runway Gen-4.5: Granular Creative Control

Runway Gen-4.5 remains the definitive industry standard for professional filmmakers who require absolute, granular control over every individual frame of their generation.

The platform offers a complimentary trial containing 125 credits, allowing editors to thoroughly test advanced proprietary features like the Multi-Motion Brush and Act-Two character controls. You can read Zapier’s full breakdown of the best generative editors for extreme creative control.

Editors can utilise the interface to dictate the exact directional movement of specific elements within an image, rather than relying on the algorithm to guess the intended physics.

If an art director uploads a static image of a landscape, they can digitally paint the water to flow left, whilst simultaneously painting the clouds to drift right. Runway outlines the current guidance on their motion control brush tools in detail, explaining how creators can precisely direct fluid animations.

Outputs generated on the introductory tier remain limited to 720p and carry a mandatory watermark, nudging commercial users toward a paid professional subscription. However, the unparalleled editorial precision makes Runway an essential free utility for prototyping highly specific visual effects that autonomous models simply cannot interpret correctly. A 2026 study by eesel AI found that Runway’s image-to-video capabilities offer the highest prompt adherence — you can explore their text-to-video evaluation methodology within their analysis.

Hedra and Higgsfield: The Multi-Model Aggregators

Consolidating disparate workflows into a single interface significantly reduces production friction and accelerates the overall campaign delivery timeline. Platforms like Hedra and Higgsfield act as multi-model aggregators, allowing creators to access various top-tier generation engines from a unified, streamlined dashboard. You can read Hedra’s full breakdown of multi-model content workflows and brand consistency.

These aggregators often provide generous free promotional credits to attract new users, effectively granting access to premium computational models without a subscription fee.

By routing prompts through an aggregator, agencies can seamlessly switch between Kling’s motion engine and Veo’s realism engine depending on the specific needs of each scene. Research from Curious Refuge confirms that tool aggregators represent the future of digital workflows — the full report on consolidated generation platforms is available via their blog.
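The routing decision itself can be written down as a simple lookup so that every scene in a storyboard is assigned to a generator before any credits are spent. This is a minimal sketch: the need tags are hypothetical labels, the mapping reflects only the strengths described in this article, and no aggregator API is called.

```python
# Strengths as characterised in this article; the tag names are hypothetical.
MODEL_BY_NEED = {
    "realism": "Google Veo 3.1",
    "human_motion": "Kling 3.0",
    "fluid_dynamics": "Luma Dream Machine",
    "frame_control": "Runway Gen-4.5",
}


def pick_model(need: str) -> str:
    """Return the generator this article associates with a scene requirement."""
    try:
        return MODEL_BY_NEED[need]
    except KeyError:
        raise ValueError(f"Unknown scene need: {need!r}") from None


def plan_shots(scenes: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Pair each (scene description, need tag) with a generator name."""
    return [(description, pick_model(need)) for description, need in scenes]


storyboard = [
    ("Product hero shot in soft studio light", "realism"),
    ("Runner sprinting across a bridge", "human_motion"),
    ("Wave crashing over a harbour wall", "fluid_dynamics"),
]
for description, model in plan_shots(storyboard):
    print(f"{model}: {description}")
```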

Users must carefully monitor the respective privacy policies of these third-party aggregators to ensure sensitive client data remains protected during generation. Operating through an intermediary platform occasionally limits access to the absolute newest model updates, but the trade-off in convenience generally justifies the slight delay in feature rollout.

ZenCreator recently examined why unrestricted generation platforms appeal to independent creators — their findings regarding data privacy and content filters are worth reading in full.

Case Study: The Boutique Agency Workflow

A boutique marketing agency in London replaced five separate software subscriptions by migrating their entire video department to a single free aggregator platform.

By utilising the bundled daily credits across multiple models, the team increased their daily social media output by 300% without incurring any additional software licensing costs. You can read more at https://emarketingsoft.com/best-free-ai-tools-for-digital-marketing/.

Building a Resilient AI Video Production Workflow

Establishing a professional-grade video production studio using entirely free applications requires stacking different platforms based on their unique, specialised strengths.

Marketers should never rely on a single autonomous generator to write the narrative script, design the base visuals, and animate the final motion sequence.

You can read Paul James’s full breakdown of the triple-stack video system for professional production.

Step 1: Scripting and Storyboarding

Commence the production workflow by deploying a free large language model like Google Gemini or ChatGPT to draft the video script and highly detailed scene prompts.

Directors must explicitly instruct the text model to write descriptive visual parameters that include exact camera angles, lighting setups, and specific subject positioning. You can read more at https://emarketingsoft.com/best-free-ai-tools-for-digital-marketing/.

Break the overarching script down into discrete three-to-five-second scenes that a video model can easily digest and render without hallucinating narrative details.

Shorter, highly specific scene descriptions consistently yield far better visual results than commanding a model to generate a complex twenty-second narrative arc in a single prompt. Forbes recently examined why precise prompt engineering drives better marketing outcomes — their insights into predictive customer orchestration are worth reading in full.
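A rough way to enforce the scene-length limit is to split the narration by sentence and cap each chunk at the number of words that fit in five seconds. The sketch below assumes a speaking rate of 2.5 words per second, which is an illustrative figure rather than anything stated by the video platforms, and it splits long sentences at word boundaries, so the results still need an editorial pass.

```python
import re

WORDS_PER_SECOND = 2.5  # assumption: approximate narration pace


def split_into_scenes(script: str, max_seconds: float = 5.0) -> list[str]:
    """Split a script into chunks short enough to narrate within max_seconds."""
    max_words = int(max_seconds * WORDS_PER_SECOND)
    if max_words < 1:
        raise ValueError("max_seconds is too short for even one word")
    # Break on whitespace that follows sentence-ending punctuation.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", script.strip()) if s]
    scenes = []
    for sentence in sentences:
        words = sentence.split()
        # A long sentence is cut into consecutive chunks of at most max_words.
        for start in range(0, len(words), max_words):
            scenes.append(" ".join(words[start:start + max_words]))
    return scenes


script = (
    "The car climbs the pass. Fog rolls over the ridge as the headlights cut "
    "through the dark and the engine note echoes off the rock walls."
)
for number, scene in enumerate(split_into_scenes(script), start=1):
    print(number, scene)
```

Each resulting chunk can then be expanded into its own visual prompt, one generation per scene.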

Proper pre-production planning significantly reduces the number of wasted generations and preserves valuable daily free credits for final rendering.

Creating a traditional paper storyboard or a basic mood board ensures the entire creative team aligns on the visual direction before initiating the compute-heavy rendering process.

A 2026 study by Content Harmony found that structured briefs reduce editorial revisions by forty percent — you can explore their workflow efficiency methodology in their latest publication.

Step 2: Generating the Base Images

Directors achieve vastly superior visual consistency by generating baseline frames in a dedicated image model first, rather than relying purely on text-to-video generation.

Utilise a free tool like Nano Banana Pro or Adobe Firefly to create high-resolution, stylistically identical starting frames for every scene outlined in the storyboard. You can read ZenCreator’s full breakdown of unrestricted image generation capabilities for consistent storyboarding.

When a marketing video requires a consistent human character across multiple scenes, generate a comprehensive character reference sheet first to anchor all subsequent image prompts.

Establishing the visual foundation in a dedicated image generator ensures corporate brand colours, character facial features, and lighting ratios remain locked before any motion algorithms are applied. You can read EaseMate’s full breakdown of one-click visual generation workflows for character consistency.

The base image serves as the foundational truth for the video model, explicitly defining the aesthetic boundaries and preventing the algorithm from improvising unwanted details.

Uploading a meticulously crafted image practically guarantees that the final animated output will align perfectly with the original creative vision and corporate brand guidelines. Research from Pixelbin confirms that high-definition image inputs drastically improve video output quality (https://www.pixelbin.io/ai-tools/video-generator).

Step 3: Animating the Scenes

Once the static baseline frames are approved, upload them into a free image-to-video generator like Kling 3.0 or Google Veo 3.1 to apply cinematic motion. Utilise simple, directional text prompts alongside the image input, such as “slow pan right” or “subtle zoom in,” to guide the model’s physics engine accurately.

You can read Kapwing’s full breakdown of image-to-video capabilities and storyboard previews before generation.

Avoid instructing the animation model to introduce entirely new elements or dramatic physical transformations that were not present in the original baseline image.

If a narrative requires a character to transition from standing to sitting, generate two separate static images and animate the transition between them using traditional video editing software. A 2026 study by AIPreneur found that controlling start and end frames actively prevents latent visual drift — you can explore their cinematic generation methodology via their video overview.

Patience remains crucial during this phase, as free tiers often place non-paying users in lower-priority rendering queues during peak server hours.

Submitting rendering tasks during off-peak times ensures faster turnaround and allows editors to review and iterate upon the generated motion sequences more efficiently. You can read Veed’s full breakdown of efficient rendering management across social media editing software.

Step 4: Upscaling and Audio Synchronisation

Download the successfully generated clips and import them into a free desktop editing suite like CapCut to assemble the final timeline and apply post-production polish.

Free video outputs frequently cap at 720p resolution, so editors must use built-in enhancement tools to upscale the footage to a crisp 1080p or 4K standard. You can read more at https://www.cnet.com/tech/services-and-software/best-ai-video-generators/.

Add professional voiceovers, ambient sound effects, and licensed background music directly within the editing interface to bring the synthetic visuals to life.

While some models like Veo 3.1 offer native audio generation, manually layering professional sound design over the clips masks minor visual imperfections and vastly improves overall viewer retention.

Research from Educational Voice confirms that high video quality and clear audio directly impact brand trust — the full report on audience retention metrics is available via their digital hub.

Final colour grading and the addition of branded graphic overlays ensure the disparate AI clips feel like a unified, cohesive corporate production.

Applying a subtle film grain or a uniform colour lookup table (LUT) helps blend the outputs from different algorithmic models seamlessly together on the timeline. You can read Canva’s full breakdown of applying branded video overlays and post-processing tools.
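For teams that prefer to batch this step outside a graphical editor, the same look can be approximated with FFmpeg's `lut3d` and `noise` filters. This is a sketch under assumptions: FFmpeg is not mentioned in the article, the LUT file name is a placeholder, the grain strength of 6 is an arbitrary starting point, and the LUT path is assumed to contain no characters that need escaping in a filter string.

```python
def build_grade_filter(lut_path: str, grain_strength: int = 6) -> str:
    """Return an FFmpeg filter chain: apply a 3D LUT, then add temporal grain."""
    if not 0 <= grain_strength <= 100:
        raise ValueError("grain_strength must be between 0 and 100")
    # lut3d applies the colour lookup table; noise with allf=t varies per frame.
    return f"lut3d=file={lut_path},noise=alls={grain_strength}:allf=t"


def build_grade_command(src: str, dst: str, lut_path: str, grain_strength: int = 6) -> list[str]:
    """Build (but do not run) the FFmpeg command for one clip."""
    return [
        "ffmpeg",
        "-i", src,
        "-vf", build_grade_filter(lut_path, grain_strength),
        "-c:a", "copy",   # leave the audio stream untouched
        dst,
    ]


# Applying the same LUT and grain to every clip keeps the timeline uniform.
for clip in ["scene_01.mp4", "scene_02.mp4"]:
    graded = clip.replace(".mp4", "_graded.mp4")
    print(" ".join(build_grade_command(clip, graded, "brand.cube")))
```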

Case Study: The Zero-Budget Documentary

An independent journalist produced a highly acclaimed ten-minute historical documentary using entirely free generative tools.

By writing the script in Gemini, generating archival-style images in Midjourney, animating them in Kling 3.0, and editing the final cut in CapCut, they bypassed traditional production costs entirely while maintaining broadcast-quality production values.

BBC News recently examined why traditional broadcasters are adopting AI animation — their findings regarding archival storytelling adaptations are worth reading in full.

Ensuring Brand Safety and Intellectual Property Compliance

Deploying synthetic media in commercial environments necessitates a rigorous approach to brand safety and intellectual property management. Legal departments must carefully vet the terms of service of any free generator to confirm that the platform grants explicit commercial usage rights for the generated outputs.

You can read more at https://www.hoganlovells.com/en/publications/ai-and-copyright.

Some platforms train their underlying foundation models on scraped, copyrighted material, creating substantial legal exposure for brands that publish the resulting videos.

Opting for commercially safe platforms like Adobe Firefly, which trains exclusively on licensed datasets, mitigates the risk of receiving copyright infringement notices from original human artists.

A 2026 study by Osborne Clarke found that transparent data provenance is now a critical compliance requirement — you can explore their regulatory labelling methodology directly on their platform.

Transparency builds consumer trust, so brands should voluntarily label synthetic media even when local regulations do not explicitly mandate disclosure.

Clearly identifying AI-generated content prevents accusations of deceptive advertising and aligns with the ethical expectations of modern, digitally literate consumer audiences.

The UK Parliament outlines the current guidance on deepfake transparency and digital likeness protection in detail, highlighting how the House of Lords recommends robust identity safeguards.

The Impact of AI Video on Digital Strategy and SEO

The landscape of digital marketing is shifting fundamentally as search engines increasingly prioritise multimedia answers and AI overviews over traditional blue text links.

Webmasters must optimise video content with clear structured data, precise narrative scripting, and authoritative trust signals to ensure high visibility in this new search paradigm.
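One common form of structured data for video is a schema.org `VideoObject` expressed as JSON-LD. The sketch below generates such a block in Python. The helper name and the example.com URLs are hypothetical placeholders, and the chosen properties are a typical subset rather than a complete or search-engine-mandated list.

```python
import json


def video_jsonld(name: str, description: str, thumbnail_url: str,
                 upload_date: str, content_url: str, duration_seconds: int) -> str:
    """Return a schema.org VideoObject as a JSON-LD string."""
    if duration_seconds < 0:
        raise ValueError("duration_seconds must not be negative")
    minutes, seconds = divmod(duration_seconds, 60)
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": thumbnail_url,
        "uploadDate": upload_date,                  # ISO 8601 date string
        "contentUrl": content_url,
        "duration": f"PT{minutes}M{seconds}S",      # ISO 8601 duration
    }
    return json.dumps(data, indent=2)


markup = video_jsonld(
    name="How to budget free AI video credits",
    description="A short walkthrough of planning clips around daily credit limits.",
    thumbnail_url="https://example.com/thumbs/credits.jpg",
    upload_date="2026-04-01",
    content_url="https://example.com/videos/credits.mp4",
    duration_seconds=95,
)
print(markup)
```

The resulting string would normally be placed inside a `<script type="application/ld+json">` element on the page that hosts the video.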

You can read more at https://www.sprintzeal.com/blog/optimize-for-ai-overviews.

Answer Engine Optimisation (AEO) requires that video scripts directly and concisely address the specific questions users type into generative search interfaces. Including a spoken, direct answer within the first fifteen seconds of a video significantly increases the likelihood that a search engine will highlight that clip as a featured snippet.

Google Search Central recently examined why high-quality, people-first multimedia content receives ranking priority — their findings on extractable information are worth reading in full.

Hosting video content natively on a corporate domain rather than relying exclusively on third-party social media platforms captures valuable inbound traffic and improves domain authority.

Embedding highly relevant video clips within long-form written articles reduces page bounce rates and signals robust content depth to search engine indexing bots.

Research from ALM Corp confirms that extractable, well-structured multimedia content performs better in AI-influenced environments — the full report on query fan-out strategies is available inside their technical portal.

Conclusion

The abrupt closure of Sora proves that marketing departments cannot afford to build critical operational strategies around a single, unpredictable software vendor, but must instead prioritise multi-model workflows and rigorous copyright compliance.

Digital strategists can protect their production pipelines by mastering alternative platforms like Google Veo 3.1, Kling 3.0, and Luma Dream Machine, which offer professional-grade rendering on free tiers. You can read more at https://www.techmeme.com/260324/p43.